On a normal summer day in June, Manny Guerrero, a soft-spoken Vietnam War veteran living in Las Vegas, picked up the phone and was told he had beaten the odds and won. The voice on the other end claimed to be from Publishers Clearing House and quickly painted a vivid picture: a check for $3,500,000, a new 2024 BMW XM SUV, balloons, and even a big party delivered to his front door if he’d like. All he had to do was cover the taxes up front.1

None of this sounded entirely unreasonable or implausible. After all, when Manny was looking for something to take his mind off the death of his wife in 2008, he started ordering items from Publishers Clearing House, hoping he could win money by being entered in their sweepstakes. So, Manny leaned in. He started writing checks and wiring money: $22,000, $30,000, $40,000 at a time. Before he knew it, $180,000 was gone.

Manny hadn’t told anyone about his win, not even his daughters. He planned to surprise them. Manny intended to use his winnings to fix up his house and leave behind an inheritance for his children. Instead, his daughters received a different kind of surprise when an FBI agent called to inform them that their father had been the victim of a scam.

Shortly after, Manny’s daughter, Debbie, asked his credit union why no red flags were raised when her father started making large withdrawals repeatedly—and why she and her sister, who were both listed on the account, were not at least notified. In response, the credit union simply told her that the money was her father’s, and he was free to do what he wanted. With no way to recoup the lost money, Manny ultimately resorted to taking out a $25,000 loan to stay afloat.

Manny’s story would be tragic even if it were rare. But unfortunately, it’s not. According to the Pew Research Center, 75 percent of American adults, across age groups, have experienced some kind of online scam or attack.2

To make matters worse, scammers are increasingly targeting American veterans. As of June 2025, nearly nine in ten servicemembers and veterans have been targeted by a military service-related scam over the past twelve months, according to data from the American Association of Retired Persons (AARP).3 American seniors are also a favorite target for scammers, with the FBI’s Internet Crime Complaint Center receiving 147,127 fraud complaints from individuals over sixty in 2024, which constitutes a 46 percent increase from 2023.4 While these numbers may seem overwhelming in and of themselves, they reflect only the cases that we know about: a growing number of victims opt to forgo reporting their experiences altogether due to shame, a fear of blame or disbelief, and the difficulty of prosecuting scams that often cross legal jurisdictions.5

All of this reinforces how what happened to Manny wasn’t just the result of misfortune, one bad actor, or a single moment of poor judgment. It was the product of a well-oiled machine, one that successfully blends breached data, social engineering, and minimal institutional friction to extract money at scale. For every Manny, there are thousands more, each targeted through tailored scripts designed to exploit people when they are vulnerable.

Treating stories like Manny’s as though they are isolated incidents misses the broader reality. Fraud and scams are a growing national crisis: one that has been shaped by our data practices; evolved alongside our emerging technologies; and reinforced by the limits of our current oversight, enforcement, and response systems.

To understand the scale of what we are all up against, and to determine what meaningful action looks like, we need to look more closely at how we got here and what today’s threat landscape really looks like.

The Origins and Features of Today’s Threat Landscape

The terms fraud and scam are frequently used interchangeably. While both terms are often used correctly to describe a scenario where a victim loses money under false pretenses, each term actually refers to a different strategy used by bad actors.

Financial fraud generally refers to dishonest or deceptive methods used to acquire information or cause financial harm, such as identity theft, SIM swapping, or payment card fraud.6 In contrast, scams are usually schemes designed to trick individuals into willingly providing money or sensitive information.7 Many attackers use social engineering in their scams, which is the tactic of manipulating, influencing, or deceiving a victim to gain control over a computer system, or to steal personal and financial information.8 For example, in romance scams, a bad actor will create fake profiles on dating websites or apps, form a relationship with a target to build trust by communicating regularly, and then eventually start asking for money.9 Bad actors may say they need the money to travel to meet their target in person for the first time or because an emergency has occurred. They may also initiate contact outside of dating platforms through popular social media platforms like Instagram or Facebook. Regardless of what a romance scam looks like, the outcome is generally the same: a broken heart, lost time, and stolen money. Other types of scams that have grown in popularity include employment scams, charity scams, and scams involving the sale of nonexistent goods or services.10

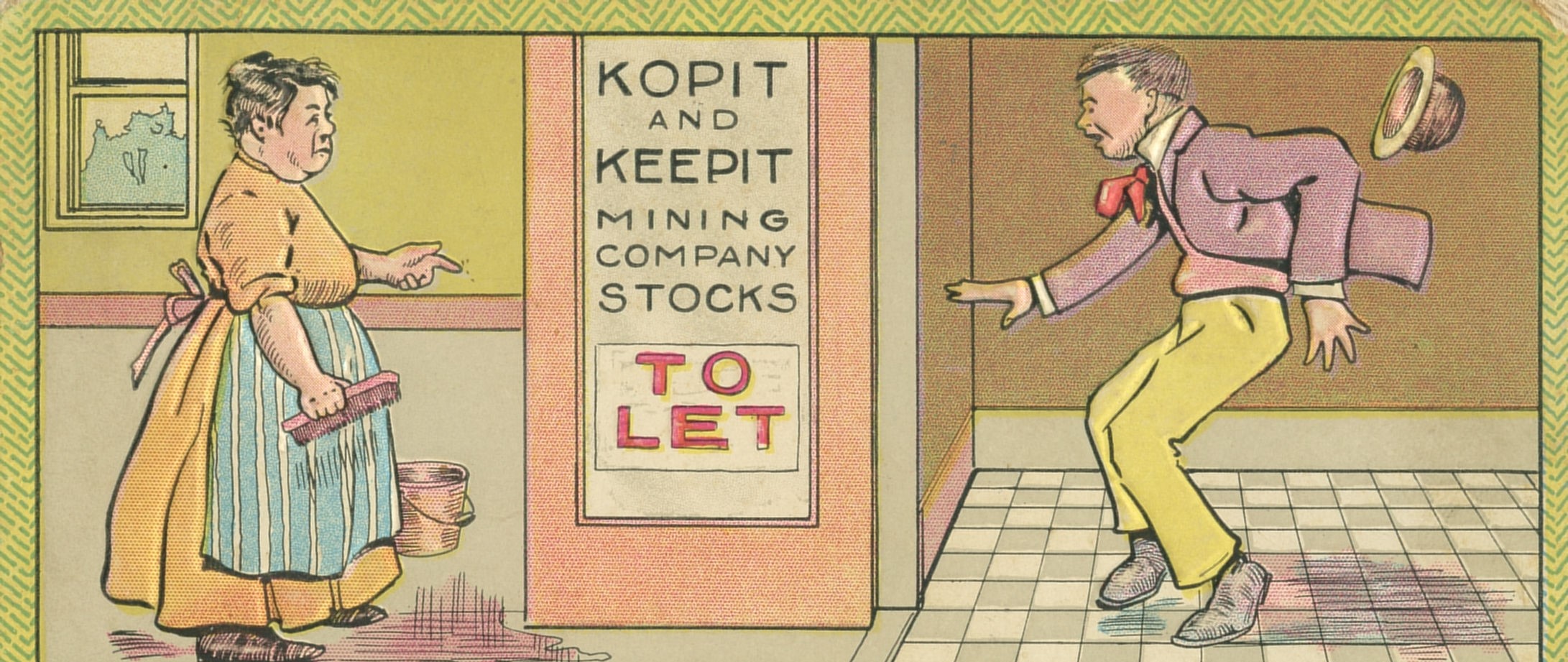

The scam is a recurring phenomenon throughout American history. In the 1840s, a sharply dressed New Yorker named William Thompson roamed the streets striking up friendly conversations with strangers he passed by.11 After establishing rapport, Thompson would ask whether they had “confidence” in him, just enough to entrust him with their watch or other valuables until the next day. While most declined, some agreed, and Thompson never returned their valuables.12 Thompson’s tactics earned him the moniker “the Confidence Man,” a term popularized by reporter James Houston in the New-York Herald in 1849, and the origin of what we now more readily recognize as the “con man.”13 Though Thompson was eventually arrested and mocked by his contemporaries for the clumsiness of his tactics, the case did reveal an enduring truth: successful scams exploit trust and often rely on creating a sense of urgency or pressure, so that the target feels cornered into making a decision swiftly.

Throughout history, fraud and scams were relatively hit or miss, constrained by friction. Physical proximity, slower communication, and limited reach imposed natural barriers on scale and repetition. Schemes required face-to-face interaction, handwritten correspondence, or extended coordination, conditions that carried high time costs and personal risk. Fraud and scams existed, but they were episodic rather than industrial.

Those constraints eroded as society digitized. As banking, commerce, and government services moved online, so did fraud and scams, not because deception itself evolved, but because the environment did. Each wave of digital transformation expanded access to money and information while reducing the effort required to exploit both. The gradual shift from mail-based communication to electronic transactions and online accounts enabled fraud and scams to occur around the clock, across institutions, and eventually, even across the borders of nation-states.14 At the same time, the growth of large-scale data creation and collection—through public records, commercial intermediaries, and data breaches—made it easier for bad actors to identify, profile, and target potential victims with specificity.15

In other words, the difference in today’s threat landscape lies in the information (data) and tools (technologies) now available to those conducting these schemes. What once required postage stamps or live callers can now be scaled to millions with a single script or email blast.16

Today, financial fraud and scams operate largely in digital spaces through robocalls, phishing emails, smishing texts, spoofed websites, and social media outreach.17 With vast amounts of personal information accessible online, bad actors can also target victims with unprecedented precision.18 Automation and artificial intelligence (AI) have lowered the cost of execution, enabling schemes to spread faster and appear more convincing.19 In some regions, scam operations have even become a full-fledged industry. Organized crime groups in Myanmar, Cambodia, and Laos have taken advantage of ongoing political instability to run large-scale cyber scam businesses.20 These scam centers are often staffed with trafficked labor and used to target victims around the world with fraudulent investment pitches and impersonation schemes. According to the FBI, Americans lost an estimated $2.6 billion to scams like these in 2022 alone.21

Governments around the world have already begun to treat cyber scam operations not only as criminal enterprises, but as national security threats. In recent years, China and Thailand have led repatriation efforts targeting the traffickers and operators behind scam centers.22 Meanwhile, the United States, Canada, and the United Kingdom have issued coordinated sanctions and ramped up cryptocurrency investigations and asset seizures in an effort to disrupt financial flows.23 While these actions have yielded some high-profile successes, experts caution that such victories remain uneven and often short-lived. As long as the incentives remain high and enforcement remains fragmented, scam operations are likely to stay highly mobile, technologically adaptive, and capable of scattering across legal jurisdictions to evade accountability, evolving faster and with greater ease than the systems trying to stop them.

How Scammers and Fraudsters Think, Target, and Profit

Not every fraudster or scammer is committing cybercrime by choice. As illustrated in the previous section, some of the people sending phishing texts are victims themselves, trafficked into scam compounds and forced to work under constant threat of violence. Their “motive,” if it can even be called that, is survival.

But for others, cybercrime is not only a choice, it is a profession. Some scammers operate independently, while others function as affiliates or contractors within larger syndicates. These criminal networks often resemble legitimate businesses, complete with specialized roles, performance targets, structured workdays, and even paid time off.24 Some cybercriminals focus on direct engagement with targets through impersonation or romance scams. Others build and sell tools or offer services, such as malware kits, fake landing pages, stolen credentials, distributed denial-of-service (DDoS) attacks for hire, and money laundering, to lower the barrier to entry for less sophisticated threat actors.25 In each case, the goal is the same: scale the operation, maximize success, and minimize risk.

Success, in turn, depends on access to data. Personal information scraped from public records, harvested in data breaches, or purchased on the dark web helps scammers profile potential victims and tailor their approach.26 A leaked email might be used for phishing. A public Facebook or LinkedIn post could offer valuable insight into an individual’s goals, interests, or life events that make someone more emotionally sensitive to psychological urgency. Something as simple as a birthday could help attackers bypass initial security questions aimed to verify an individual’s identity when they expand the scope of their targeting to other accounts owned by the individual. All of this helps attackers improve the precision of their targeting and increase the success rate of their operations.

Sometimes, this targeting isn’t just precise, it’s refined over time.27 In multiple federal cases in the United States, data brokers were caught knowingly selling lists to scammers and incorporating scammer feedback into their models.28 One firm, Epsilon Data Management, provided data on more than 30 million Americans to scammers over nearly a decade.29 Others, like Macromark and KBM Group, reportedly went as far as partnering with scammers to improve profiling algorithms—turning revictimization into a lucrative business model.30

Once a victim engages, monetization can happen in a few different ways.31 Some targets are coaxed into sending wire transfers, gift cards, or cryptocurrency. Others have their credentials harvested, their bank or benefits accounts drained, or their identities stolen. Stolen data is often repackaged and resold, fueling the next wave of scams.32 Once laundered, the profits can be reinvested into upgrading existing infrastructure or hiring more personnel.

Nation-state threat actors have also turned to financially motivated cybercrime. In some cases, these campaigns are explicitly designed to generate revenue. For example, North Korean operatives routinely compromise crypto wallets and exchanges to funnel revenue toward the regime’s weapons development and sanctions evasion efforts.33 In other cases, cybercrime enables broader state objectives. Chinese espionage groups have reportedly supplemented their campaigns by monetizing stolen data, while Russia has turned to criminal actors to support its war in Ukraine.34 As the lines continue to blur, cybercrime is increasingly both a revenue stream and an operational enabler for advanced persistent state-backed threats, supplementing broader campaigns of espionage, disruption, and influence.

Where Law Enforcement Falls Short

When someone realizes they have been defrauded or scammed, the guidance they receive is often centralized and procedural, particularly in the first twenty-four to seventy-two hours. Victims are encouraged to stop communicating with the fraudster or scammer, immediately notify their bank, account manager, or payment provider, secure compromised and vulnerable accounts, reset passwords, and document what happened to the best of their ability.35 Many are also advised to file reports with the Federal Trade Commission or the FBI’s Internet Crime Complaint Center, and in some cases, to contact local law enforcement or a state attorney general’s office to formally report the incident.36

These recommended best practices are well intentioned and can sometimes materially influence how widespread the damage becomes. Acting quickly can help prevent additional breaches and monetary losses, preserve evidence, and alert financial institutions to suspicious activity. This guidance also reflects how fraud and scams are handled today across banks, technology companies, and law enforcement agencies at the local, state, and federal levels. For victims navigating shock, confusion, and urgency, these steps offer a sense of direction in a moment when the rug has been pulled out from underneath them, often without warning or ceremony.

At the same time, this default guidance often reveals the limits of the system victims are being asked to rely on. The sobering reality is that once funds are transferred, especially through wire services, gift cards, cryptocurrency, or accounts routed overseas, recovery is rare.37 Local police departments, while often the first point of contact, typically lack the jurisdiction or technical capacity to pursue cases that cross state or national boundaries.38 State legislative offices may receive complaints or requests for assistance from constituents, yet face similar constraints when suspected perpetrators and payment pathways extend far beyond their scope of authority.39 Even at the federal level, agencies contend with overwhelming caseloads, fragmented data, and limited resources to trace funds that move rapidly between bad actors and across platforms or borders.40

The challenge is further compounded by attribution: perpetrators frequently obfuscate their identities, operate across jurisdictions, and rapidly change tactics, meaning investigative leads can go cold as techniques evolve in days or weeks rather than months or years.41 In practice, many reports function primarily to document harm and raise awareness rather than to reverse losses or deliver substantive resolution.42

Over time, this dynamic can discourage reporting altogether, reinforcing undercounting and weakening the data that institutions, policymakers, and law enforcement rely on to understand emerging threats.43 As these experiences compound, trust in digital communications, financial systems, and public institutions begins to erode, making everyday interactions and decisions more fraught and defensive.44

None of this reflects a lack of concern or effort from law enforcement, public officials, or industry. Rather, it underscores a fundamental mismatch between the scale and speed of modern scams and the systems available to address them. Fraud and scams today are digital, distributed, and transnational. Enforcement, by contrast, remains largely reactive, jurisdiction-bound, and resource-constrained. Attribution is difficult, recovery is unlikely, and accountability often lags behind innovation.

The result is a response framework that is forced to prioritize damage control over meaningful remediation. For victims, that gap can feel like resignation. For institutions, it exposes structural limitations. Taken together, these constraints help explain why so many Americans feel that even when they do everything they are told to do, the outcome still falls short.

Where Policy Can Move the Needle

Encouragingly, combatting financial fraud and scams has emerged as a rare area of bipartisan focus, driven by the growing recognition that these crimes do not discriminate by income, geography, or political affiliation—and that, left unaddressed, they not only harm individual Americans but also threaten our national security.

At the federal level, recent legislative proposals reflect a clearer understanding of both the scale of the crisis and the structural weaknesses in the current responses and resources available to Americans. Senators Mike Crapo (R-Idaho) and Mark Warner (D-Virginia), joined by Jerry Moran (R-Kansas) and Raphael Warnock (D-Georgia), introduced the Task Force for Recognizing and Averting Payment Scams (TRAPS) Act in June 2025 to create a standing interagency body spanning the Treasury Department, Consumer Financial Protection Bureau, Federal Communications Commission, Federal Trade Commission, Department of Justice, prudential regulators, and industry representatives.45 Rather than imposing new compliance requirements, the task force model focuses on coordination: clarifying institutional roles, improving information sharing, and identifying gaps that might prevent agencies from acting effectively in concert.46 In doing so, the TRAPS Act has the potential to provide federal leadership and strategic direction across agencies that too often operate in silos rather than as a team toward a shared mission.

Complementing this approach, the Guarding Unprotected Aging Retirees from Deception (GUARD) Act emphasizes capacity-building over rulemaking.47 Introduced on a bipartisan basis by Representatives Zach Nunn (R-Iowa), Scott Fitzgerald (R-Wisconsin), and Josh Gottheimer (D-New Jersey) in April 2025, the proposed bill would expand permissible uses of existing Department of Justice grant funding, allowing state, local, tribal, and federal law enforcement agencies to invest in hiring more investigative staff, modern forensic tooling such as blockchain analytics, and cross-jurisdictional coordination.48 By focusing on practical enablement rather than rigid new mandates, the GUARD Act reflects a pragmatic understanding of where policy can most realistically improve outcomes in an environment defined by speed, scale, and cross-border complexity.

Still, until legislative measures such as the TRAPS Act and the GUARD Act are enacted—and in the absence of a coherent national strategy—industry has largely been left to shoulder the operational burden of response. Financial institutions, in particular, have strong incentives to protect their consumers from falling victim to frauds and scams and have invested heavily in education campaigns, enhanced authentication, behavioral monitoring, transaction delay mechanisms, and dedicated fraud response teams responsible for identifying and interrupting suspicious activity earlier in the transaction lifecycle.49 These efforts reflect years of learning under constant pressure from evolving scam tactics and shifting consumer behavior.

Some policy debates, however, risk misdiagnosing where responsibility and leverage most effectively reside.50 Financial institutions are often treated as the natural locus of liability because they handle the movement of funds. In reality, though, banks are more often the final link in a much longer chain of deception that originates elsewhere: on social media platforms, over telecommunications networks, through spoofed identities, and via sustained psychological manipulation or emotional appeal that occurs entirely outside the financial system.51

Policy approaches that default to liability shifting or mandatory reimbursement for consumer-authorized transactions may be well intentioned, but they focus narrowly on the endpoint of a scam, leaving the upstream ecosystem largely intact. Over time, that can narrow the menu of viable defenses by pushing institutions toward compliance-driven controls rather than toward proactive prevention, early intervention investments, and ongoing adaptive solutions that actually reduce harm.52 When incentives tilt in this direction, collaboration, initiative, and defense ultimately suffer, even as fraud and scam vectors themselves continue to evolve.

The Role of Emerging Technologies

Beyond clearer guidance, better coordination, and capacity-building, effective policy must also recognize and support the role that emerging technologies increasingly play in combatting fraudsters and scammers. Although it can be tempting to view technology as the primary driver of fraud and scams, its responsible deployment remains one of the most effective tools for early detection, real-time response, and post-incident recovery.53

At the consumer level, artificial intelligence is already shifting fraud prevention from reactive clean-up to more timely intervention. Instead of relying primarily on spam filters or consumer reports, newer fraud prevention systems learn what “normal” looks like for an individual user and assess the severity of a potential risk when behavior meaningfully deviates from that baseline.54 Sudden changes in login activity, unusual payment requests, atypical transaction routing, or patterns inconsistent with prior behavior can trigger temporary account freezes.55 Moreover, on-device protections recently launched by major technology companies are designed to detect suspicious phone and text conversations in real time, alerting users when requests or questions resemble known scam tactics and advising them to pause or exit the conversation.56 While these brief interruptions may only last a few seconds, they can be enough to disrupt the urgency scammers rely on and give consumers the space to reconsider before funds or sensitive information are exchanged.57
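The baseline idea described above can be illustrated with a deliberately simple sketch. It is not how any particular institution's system works; production tools draw on many more signals and far more sophisticated models. The function names, the sample amounts, and the three-standard-deviation threshold are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def deviation_score(history, amount):
    """Return how many sample standard deviations `amount` sits from the
    mean of a user's past transaction amounts (hypothetical helper)."""
    if len(history) < 2:
        return 0.0  # too little history to define a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return 0.0 if amount == mu else float("inf")
    return abs(amount - mu) / sigma


def assess(history, amount, hold_threshold=3.0):
    """Flag a transaction for a temporary hold when it deviates sharply
    from the user's own baseline. The threshold is an assumed value."""
    score = deviation_score(history, amount)
    return "hold_for_review" if score >= hold_threshold else "allow"


# Illustrative data only: small routine withdrawals, then a large one.
usual = [40, 55, 60, 45, 50]
print(assess(usual, 58))
print(assess(usual, 22000))
```

Even a crude check of this kind would separate a routine withdrawal from a sudden five-figure transfer, which is the sort of pause that can interrupt a scammer's manufactured urgency.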

At the enforcement and recovery level, AI-enabled forensic tools now allow investigators to sift through and correlate large volumes of transaction data, communications metadata, platform activity, and open-source intelligence to identify laundering patterns, coordinated networks, and shared infrastructure that would otherwise be difficult to discover manually.58 When paired with blockchain analytics, these capabilities have already enabled law enforcement to trace illicit flows and recover assets tied to large-scale cryptocurrency fraud, outcomes that until recently were widely viewed as nearly impossible.59
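At its simplest, tracing illicit flows amounts to following transfers outward from a known victim account. The sketch below shows that core step as a breadth-first walk over a list of transfers. It is a toy illustration, not a description of any real forensic product: the account labels, the sample data, and the `trace_funds` helper are all hypothetical.

```python
from collections import deque


def trace_funds(transfers, source):
    """Walk outward from `source` over (sender, receiver, amount) records
    and return each reachable account with its hop distance."""
    outgoing = {}
    for sender, receiver, _amount in transfers:
        outgoing.setdefault(sender, []).append(receiver)

    hops = {source: 0}
    queue = deque([source])
    while queue:
        account = queue.popleft()
        for nxt in outgoing.get(account, []):
            if nxt not in hops:  # record only the first (shortest) path
                hops[nxt] = hops[account] + 1
                queue.append(nxt)
    return hops


# Illustrative data only: funds pass through two intermediaries.
transfers = [
    ("victim", "mule_a", 5000),
    ("mule_a", "mule_b", 4900),
    ("mule_a", "exchange", 100),
    ("mule_b", "exchange", 4800),
    ("unrelated", "shop", 20),
]
print(trace_funds(transfers, "victim"))
```

Real investigations layer attribution, timing, and amount analysis on top of this kind of traversal, but the underlying question is the same: which accounts sit downstream of the stolen funds, and how many steps away.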

Yet even as defenses adapt, the threat landscape continues to move. While stakeholders are still adjusting to AI-enabled techniques, the next wave of fraud and scams is already taking shape.60 Agentic AI systems, for instance, are likely to introduce higher transaction volume and promise to shift more transactions to machine-to-machine execution, where intent, authorization, and accountability are harder to assess in real time.61 At the same time, these technologies also expand what is possible for defenders. If deployed responsibly, agentic AI can support continuous monitoring, real-time risk assessment, and rapid intervention as transaction volume and velocity increase.62 Quantum computing poses a longer-term challenge by threatening existing cryptographic safeguards, potentially lowering the cost of fraud if systems are unprepared, but it also holds promise for accelerating anomaly detection and pattern analysis across complex financial networks.63 Ultimately, the impact of emerging technologies will depend less on innovation itself than on policy choices that determine whether defenses can scale.

Moving Beyond the Crisis

Understanding how cybercriminals operate—who they are, how they think, and what makes them successful—should fundamentally shift how we respond. We cannot afford to treat these attacks as one-off crimes or blame victims for being targets.64 We must recognize that financial fraud and scams are part of a larger system of exploitation, built on unchecked data flows, growing technological sophistication, and institutional blind spots.

While law enforcement and regulation remain essential, on their own they are not equipped to keep pace with the speed and scale at which today’s cybercrimes evolve and occur.65 Addressing this crisis effectively requires a whole-of-society approach, one that strengthens collaboration and coordination across sectors and embraces the role that emerging technologies can play in detecting suspicious activity early, tracing stolen funds, and protecting consumers in real time without distorting incentives or stifling innovative solutions.66

This shift in mindset matters. Combatting fraud and scams is no longer simply a consumer protection issue.67 It is a fight for security, resilience, and trust in the digital age. Every American is now on the frontlines of the fight. Ensuring they are not left to face it alone is more than just good or pragmatic policy: it is a national security imperative.

This article is an American Affairs online exclusive, published February 20, 2026.

Notes

1 Joe Vigil, “Las Vegas Vietnam Veteran Says He Lost $180,000 to Scammers,” Fox 5 Las Vegas, December 26, 2024.

2 Jeffrey Gottfried et al., “Online Scams and Attacks in America Today,” Pew Research Center, July 31, 2025.

3 Kevin Ozebek, “Scammers are Targeting Veterans at Alarming Rate. How to Protect the Vet in Your Life,” ABC 7, June 3, 2025.

4 Matt Bracken, “House Bill Seeks to Better Tech to Combat Financial Fraud Scams against Elderly,” CyberScoop, April 25, 2025.

5 Haiman Wong, “Cybersecurity Score – Guarding Unprotected Aging Retirees from Deception (GUARD) Act,” R Street Institute, July 21, 2025.

6 “Fraud vs. Scams, Explained,” PayPal, October 12, 2023.

7 PayPal, “Fraud vs. Scams, Explained.”

8 “Social Engineering,” Carnegie Mellon University, accessed February 2026.

9 “What to Know About Romance Scams,” Federal Trade Commission, August 2022.

10 “What are Some Common Types of Scams?,” Consumer Financial Protection Bureau, March 13, 2024.

11 “Arrest of the Confidence Man, New-York Herald, 1849,” Lost Museum Archive, accessed February 2026.

12 Lost Museum Archive, “Arrest of the Confidence Man, New-York Herald, 1849.”

13 Lost Museum Archive, “Arrest of the Confidence Man, New-York Herald, 1849.”

14 Toby Braun, “The Evolution of Fraud: New Platforms, Old Tricks,” Forbes, August 26, 2024.

15 Braun, “The Evolution of Fraud: New Platforms, Old Tricks.”

16 Braun, “The Evolution of Fraud: New Platforms, Old Tricks.”

17 Iyer, “Scams in the Digital Era: How Technology is Changing Fraud.”

18 Ugnė Zieniūtė, “Reconnaissance in Cybersecurity: Everything you Need to Know,” NordVPN, December 30, 2024.

19 Brian Joseph, “Advances in Financial Scam Tech Raises Alarms with State Legislators and Financial Sector,” LexisNexis, August 31, 2023.

20 Clara Fong and Abi McGowan, “How Myanmar Became a Global Center for Cyber Scams,” Council on Foreign Relations, May 31, 2024.

21 Fong and McGowan, “How Myanmar Became a Global Center for Cyber Scams.”

22 Fong and McGowan, “How Myanmar Became a Global Center for Cyber Scams.”

23 Fong and McGowan, “How Myanmar Became a Global Center for Cyber Scams.”

24 AJ Vicens, “Cybercrime Groups Offer Six-Figure Salaries, Bonuses, Paid Time off to Attract Talent on Dark Web,” CyberScoop, January 30, 2023.

25 Kate Fazzini, “Cybercrime Organizations Work Just Like Any Other Business: Here’s What They Do Each Day,” CNBC, May 5, 2019.

26 Sheila Burke, “How Scammers Target Victims on Social Media,” AARP, April 1, 2023.

27 Paul Wagenseil, “Cybercrime, Inc.: How the Bad Guys Adopted the Business Model,” SC Media, October 13, 2022.

28 Alistair Simmons and Justin Sherman, “Data Brokers, Elder Fraud, and Justice Department Investigations,” Lawfare, July 25, 2022.

29 Simmons and Sherman, “Data Brokers, Elder Fraud, and Justice Department Investigations.”

30 Simmons and Sherman, “Data Brokers, Elder Fraud, and Justice Department Investigations.”

31 Insikt Group, “The Business of Fraud: An Overview of How Cybercrime Gets Monetized,” Recorded Future, February 25, 2021.

32 Thomas Holt, “How Illicit Markets Fueled by Data Breaches Sell Your Personal Information to Criminals,” Conversation, June 5, 2025.

33 “Justice Department Announces Coordinated, Nationwide Actions to Combat North Korean Remote Information Technology Workers’ Illicit Revenue Generation Schemes,” U.S. Department of Justice, July 3, 2025.

34 Google Threat Intelligence Group, “Cybercrime: A Multifaceted National Security Threat,” Google Cloud, February 11, 2025.

35 Jennifer Leach, “If Someone You Cared about Paid a Scammer, Here’s How to Help,” Federal Trade Commission, April 10, 2024.

36 Leach, “If Someone You Cared about Paid a Scammer, Here’s How to Help.”

37 Matt Alderton, “How and Where to Report Scams—and Why It’s Crucial to Do So,” AARP, December 29, 2025.

38 Virginia Police Department, “Reporting Scams to Local Police: Understanding Their Role and Limits,” AARP, July 2, 2025.

39 NCSL Staff, “New Approaches in the Fight Against Consumer Fraudsters,” National Conference of State Legislatures, November 11, 2025.

40 “Fighting Fraud: Scams to Watch out For,” Congress.gov, September 9, 2024.

41 Alderton, “How and Where to Report Scams—and Why It’s Crucial to Do So.”

42 Alderton, “How and Where to Report Scams—and Why It’s Crucial to Do So.”

43 Alderton, “How and Where to Report Scams—and Why It’s Crucial to Do So.”

44 Moya Crockett, “The Secret Health Hell of Being Scammed: ‘I Felt as Though My Mind was Disintegrating’,” Guardian, October 23, 2024.

45 Caroline Melear, “Bipartisan Efforts are Underway to Tackle Financial Fraud and Scams,” R Street Institute, July 17, 2025.

46 Melear, “Bipartisan Efforts are Underway to Tackle Financial Fraud and Scams.”

47 Haiman Wong, “Cybersecurity Score – Guarding Unprotected Aging Retirees from Deception (GUARD) Act,” R Street Institute, July 21, 2025.

48 Wong, “Cybersecurity Score – Guarding Unprotected Aging Retirees from Deception (GUARD) Act.”

49 Brian Fritzsche, “Banks Are Fighting Fraud and Scams, But We Can’t Do It Alone,” Open Banker, July 10, 2025.

50 “Bank Trade Groups Call out Misleading Warren Report on Zelle Fraud,” ABA Banking Journal, October 3, 2022.

51 Kennedy Meda, “Consumers or Financial Institutions: Who Bears the Burden of Scam-Induced Fraud Losses?,” Thomson Reuters, August 13, 2024.

52 Meda, “Consumers or Financial Institutions: Who Bears the Burden of Scam-Induced Fraud Losses?.”

53 Haiman Wong and Caroline Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI,” R Street Institute, October 23, 2025.

54 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

55 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

56 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

57 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

58 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

59 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”

60 “Looking Ahead at the Future of Fraud,” LexisNexis Risk Solutions.

61 Patrick Cooley, “How Agentic AI Could Turbocharge Fraud,” Payments Dive, November 4, 2025.

62 Haiman Wong and Tiffany Saade, “The Rise of AI Agents: Anticipating Cybersecurity Opportunities, Risks, and the Next Frontier,” R Street Institute, May 29, 2025.

63 Kate Whiting, “Quantum Leaps: 3 Ways Banks Can Harness Next-Gen Technologies for Financial Services,” World Economic Forum, July 21, 2025.

64 Meda, “Consumers or Financial Institutions: Who Bears the Burden of Scam-Induced Fraud Losses?.”

65 Mike Puglia, “Stop Blaming the Victim: Why the Fight Against Cybercrime Needs to Change,” Infosecurity Magazine, July 4, 2025.

66 Lindsey Johnson, “Sadly, the Scammers Are Winning—But Our Government Can Help,” Hill, April 30, 2025.

67 Wong and Melear, “Protecting Americans from Fraudsters and Scammers in the Age of AI.”