2 The Supreme Court relied primarily on Bork’s argument that Congress intended the Sherman Act to advance consumer welfare in making its landmark statement in Reiter v. Sonotone Corp. (1979) that “Congress designed the Sherman Act as a ‘consumer welfare prescription.’”

Conservatives and Big Tech: The Return of the Republican Tradition

Conservatives have been on a collision course with Big Tech for some time. The removal of President Trump from major social media platforms in 2021 further inflamed preexisting concerns, and Republican deference to the Chamber of Commerce agenda on these issues can no longer be taken for granted. From censorship, to political bias, to data privacy, to child exploitation, to human trafficking and the increasingly violent online pornography epidemic—along with economic concerns regarding market concentration and anticompetitive behavior—the problems with Big Tech are complex and multifaceted.

There are, moreover, divisions among conservatives about the severity of the problems posed by Big Tech and disagreement over the right policy solutions to address these problems. With Republicans poised to take at least one house of Congress in the midterm elections, these intramural debates among conservatives are likely to take on a broader significance in the years ahead.

Three Problems

One major Republican complaint involves social media platforms’ censorship and suppression of speech, particularly of conservatives. Political and religious censorship by Big Tech companies is only increasing. It began with prominent conservative politicians being censored, or high-profile stories like the New York Post’s scoop on Hunter Biden’s laptop, but now smaller conservative organizations are also being censored. Those advocating against CRT in schools as well as religious nonprofits advocating for pro-life, pro-family policies have become targets more recently. A Napa Legal Institute report released in early 2021 found religious censorship was happening at a rate of at least one incident per week.

Social media companies in Silicon Valley, which have a clear political bias, are making choices every day about what speech is acceptable for Americans and thereby fundamentally altering democratic debate. They are shaping the public square, limiting conservatives’ ability to convey arguments to the public, and limiting society’s ability to participate in important debates. If conservatives don’t have equal access to these channels, then there is no open and fair marketplace of ideas, which in turn affects conservatives’ ability to win other important political arguments. Conservatives increasingly see holding Big Tech accountable as fundamental to all other policy battles.

Second, the harms to children and the amount of criminal content on tech platforms show that Big Tech companies are not simply neutral, rational actors. They are often bad actors. The recent “Facebook Files” reporting by the Wall Street Journal makes clear that these companies are out for their own bottom line, not what is best for Americans or for our children and families. They are actively trying to addict children, at younger and younger ages, to their products, despite their own internal research showing how those products lead to eating disorders, depression, and other mental health problems. The Journal likewise revealed that platforms’ algorithms serve up sexual and illicit content to children, sending them down dangerous rabbit holes—but social media companies are doing nothing about it.

Social media platforms frequently host and allow criminal content, such as sex and drug trafficking, to proliferate on their platforms. They allow the very content they are legally empowered to remove as Good Samaritans by Section 230¹—content that is “obscene, lewd, lascivious, filthy, excessively violent, and harassing”—while at the same time using that provision’s vague “otherwise objectionable” term to take down conservative content.

Third, the Big Tech companies’ consolidation of market power and potential violations of antitrust law are preventing start-ups or conservative alternatives from succeeding. They use their dominance to advantage their own products and create private ecosystems that are impossible for start-ups to break into. Look at what happened to Parler. Big Tech apologists often say that competitors are free to start their own platforms, and so Parler did. Then Apple and Google, gatekeepers of the app market, took it off their app stores, and Amazon canceled its web services, taking away its operating infrastructure. These actions effectively shut Parler out from being a competitor in the digital space. (On a practical note, conservatives could do a better job building alternative internet infrastructures to host conservative platforms, so they can’t be shut down. RightForge is a recent example of such efforts.)

The Inadequacy of Old Approaches

Today, the Right is engaged in a critical debate about all of these issues. Are the problems that bad? Will proposed government policies just make things worse? Won’t the market solve these problems? Others argue that conservatives rightly distrust Big Government, but that this concern should not mean turning a blind eye to Big Tech. In some respects, Big Tech is just as dangerous as Big Government. In many ways, Big Tech has even become an extension of Big Government; just look at the ideological alignment between Silicon Valley and the current administration, including the recent example of Big Tech censoring “Covid misinformation” at the behest of the Biden administration. The eminent Columbia Law School professor Philip Hamburger has argued convincingly that Section 230 has merely privatized government censorship.

Conservatives are increasingly exploring the questions of what limits should be placed on the power of Big Tech. As Ryan Anderson has observed, “absolutism about market freedoms is untenable. This is not to downplay the importance of economic freedom and property rights but to say while those freedoms are important, so too are other things. The common good is multifaceted, and granting Big Tech unlimited liberties is not how to best protect human flourishing and human dignity.”

Much of the frustration with Big Tech today stems from the fact that two of the main tools for holding private businesses accountable and ensuring that they are not harming consumers or the public good have essentially been blocked when it comes to the largest internet companies.

The first is litigation. Typically, when private businesses cause harm to consumers they are sued and held accountable through the judicial system. Yet under Section 230—or rather the bad precedent that has overly expanded that statute’s interpretation—the internet companies have enjoyed broad immunity from liability. The normal path of litigation for holding Big Tech accountable has been blocked.

The second traditional means for accountability is antitrust enforcement. When a merger or acquisition would prove harmful to consumer welfare in a particular market, it is supposed to be blocked. When it comes to Big Tech, however, the traditional metric for measuring consumer harm—price—has been difficult to apply since many products and services are nominally free. The Big Tech companies operate in digital, zero-price markets, and this new dynamic has inhibited sufficient antitrust enforcement against these companies.

To address these challenges, three main policy tools have emerged, which form the basis of debate on the right today.

Reforming Section 230

Currently tech companies are able to hide behind the vague language of “otherwise objectionable” in Section 230 to remove political content they find offensive or disagree with, while avoiding liability for hosting criminal content. This situation has led to broad agreement among lawmakers that Section 230 needs to be reformed to realign that law with its original intentions.

Justice Clarence Thomas, in his statement in the Malwarebytes v. Enigma Software case, summarizes how Section 230 has gone wrong. Thomas argues that Section 230 was meant to make internet companies immune from only publisher liability, not from publisher and distributor liability. The Zeran v. America Online (1997) case, which other courts unfortunately have followed and expanded upon, incorrectly eliminated distributor liability for platforms, conferring 230 immunity even when an internet company distributes content that it knows is illegal. Thomas also explains that 230 has been expanded to give immunity from publisher liability to internet companies even when the providers have developed the content in part. Now platforms receive protection for traditional publisher functions like editing or removing content, adding commentary, and so forth, which Thomas argues has eviscerated the narrow liability shield in Section 230, namely the specific protections Congress gave for removing certain enumerated content in provision (c)(2). The current interpretation by the courts means that Section 230(c)(1), which excludes platforms from being treated as publishers or speakers, swallows up the specific immunity granted in (c)(2). Lastly, Section 230 has been extended to protect companies from product defect claims for the defendant’s own misconduct in design flaws. Thus, Section 230 has given blanket immunity to internet platforms, to the point where the prevailing interpretation is now immunizing tech companies from even their own wrongdoing.

Congressional Republicans have introduced two prominent reform proposals. One is Senator Marco Rubio’s DISCOURSE Act, which takes away immunity for platforms if they engage in three behaviors: algorithmic amplification, content moderation (promoting or suppressing a particular viewpoint), and information creation/development (modifying, commenting on content). Another is Representative Greg Steube’s CASE-IT Act, which would make Section 230 immunity conditional for market-dominant Big Tech companies: in order to receive the special government protection, these platforms would be required to adhere to a First Amendment standard in their content moderation practices, and they would not receive immunity if they are facilitating the distribution of illicit, obscene, exploitative, or harmful content to minors.

The Department of Justice’s legislative proposal on Section 230 reform released by Attorney General Barr back in 2020 (I was working in his office at the time) also contains model language that the Right may look to and which would address the issue of 230 conferring immunity from distributor liability. The proposal removed protections for “bad Samaritans” that willingly allow criminal content to be distributed on their platforms. It also sought to clarify the vague term “otherwise objectionable” that platforms have been hiding behind to remove conservative content.

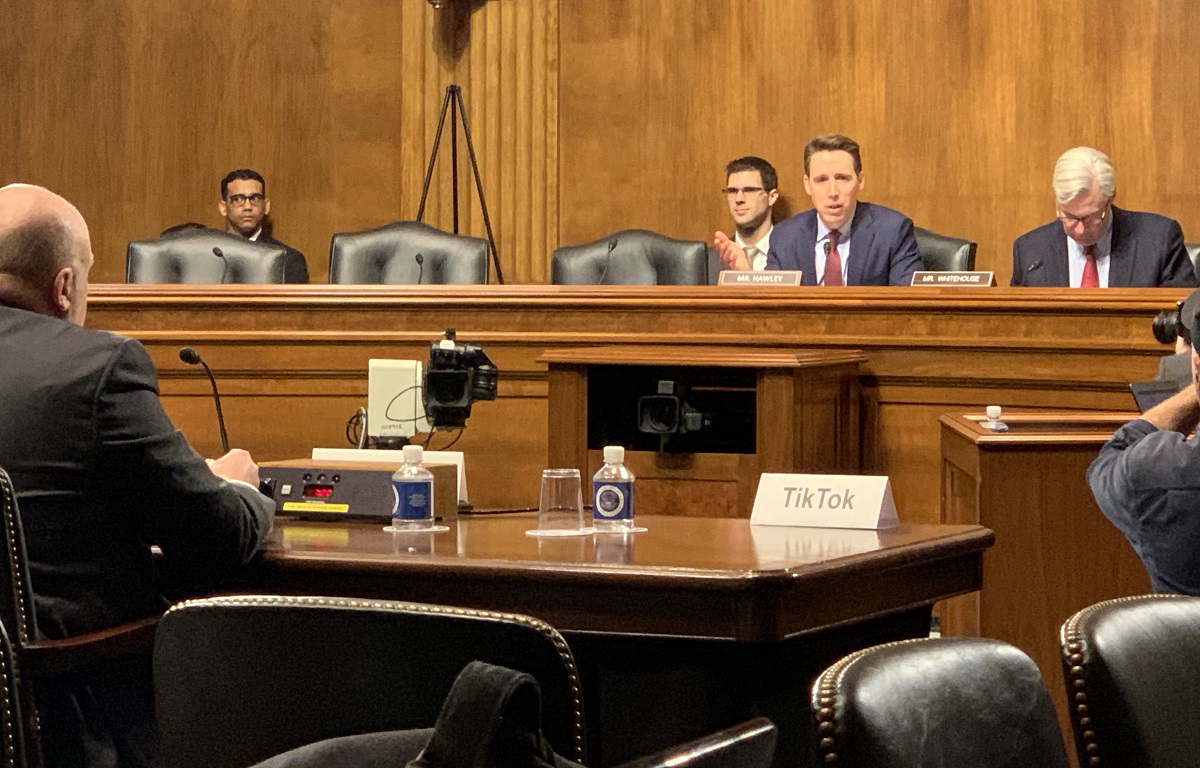

In addition, Representative Cathy McMorris Rodgers is heading up a Big Tech Accountability Platform for the House Energy and Commerce Republicans that is expected to include a number of Section 230 reform bills targeting different issues in the near future. Senators Josh Hawley, Mike Lee, Lindsey Graham, and Bill Hagerty, plus others in the House like Representative Jim Jordan, have all introduced their own Section 230 bills in this Congress or in the past.

The challenge, however, is that Republicans have been unable to reach a consensus and rally behind one reform proposal. Thus, it seems unlikely Congress will be able to pass a meaningful reform anytime soon. In addition to the bills on the right, Democrats have introduced bills of their own with a different aim and vision. Their focus is on holding platforms accountable for promoting misinformation and disinformation. Finally, there are forces on the “right” actively opposing any type of Section 230 reform.

Certain libertarian think tanks as well as industry trade groups have been actively arguing against any Section 230 modifications. They argue that Section 230 reforms would force the platforms to host speech which they and their community of users find objectionable. As a report by the Cato Institute argues, removing or significantly modifying Section 230 would once again force platforms to face the “moderator’s dilemma,” a situation that existed before Section 230 was enacted. Platforms would be forced to choose between two equally unattractive alternatives: They could attempt to minimize their liability by scrutinizing every user post for its potential risks (likely delaying all posts for substantial periods of time, and significantly increasing the cost of operating their site). Or they could engage in no moderation whatsoever, in which case the pre–Section 230 common law established that they would not be liable for their users’ content—but in which case, also, their users’ experience would be badly degraded. The argument holds that if Section 230 is reformed, the internet will become a cesspool of pornographic and violent, disturbing content.

These claims, however, tend to ignore the fact that most conservative reform proposals do not call for a blanket repeal. In particular, most leave in place the protections for taking down “obscene, lewd, lascivious” content and, if anything, the reforms seek to further restrict illegal content by removing Section 230 protections for bad Samaritans who knowingly allow such content to proliferate.

The Cato report also warns that reforms would lead platforms to become more restrictive of user-generated content, negatively affecting many more people than those currently being affected by censorship. If Section 230 were significantly narrowed, the authors say, platforms would face more litigation and its accompanying costs for content moderation decisions. “Given the volume of user-created content hosted by even modest-sized platforms, newer and smaller internet services would likely face far more litigation around their content moderation decisions than they would be able to afford. These significant, unrecoverable costs would impair the ability of new platforms to compete.”

Arguments about protecting start-ups have gained traction and led to agreement that repealing Section 230 completely, without replacing it, would not be the right response for conservatives. As Klon Kitchen, currently of AEI, has written in the past (when at Heritage), “Mend It, Don’t End It.” Conservatives do not want platforms to have publisher liability for all third-party content hosted on their sites. Section 230 thus does serve an important function in protecting companies, especially start-ups, so they can serve as a conduit for others’ speech. Incentivizing more right-leaning start-ups in the tech space, which would likely have a greater commitment to free speech, could help provide viable alternatives to Big Tech.

There is also increasing recognition that a key issue with Section 230 is the immunity it gives social media platforms for their own algorithms. The push to open tech platforms up to liability for their algorithms, for amplifying certain kinds of content and suppressing others, has gained the most momentum in Congress this past year on the right and left. Currently, platforms have broad immunity for any content moderation, including their use of algorithms. But these algorithms essentially make the platforms more like publishers than neutral hosts, as Justice Thomas explained, because companies are receiving immunity for traditional publisher editorial functions. With infinite third-party content to work with, platforms can choose to suppress or amplify certain material to essentially communicate the messages they want to communicate. Thus, through their algorithms, they are essentially “speaking” and acting as publishers, but aren’t liable for editorial decisions being made by the algorithms they create and design. This may be the most promising area to find agreement on reform.

It is also worth noting that there have been genuine bipartisan efforts to address social media’s harms to children, and it is possible that a bipartisan bill to address these harms through a narrow Section 230 reform could pass. For example, the bipartisan EARN IT Act has now passed out of the Senate Judiciary Committee. The bill would carve federal and state child sexual abuse material (CSAM) laws out of Section 230, so that if victims sued under those CSAM laws, Section 230 would no longer affect their lawsuits. It follows the precedent of harm-motivated reform set by FOSTA-SESTA, which passed into law in 2018 and amended Section 230 to protect against online sex trafficking.

While waiting on Congress to act, the courts could resolve many of Section 230’s current issues by correcting its overly broad interpretation, a review which is long overdue. The Supreme Court could overturn the current precedent that has expanded 230’s immunity protections beyond the original intent of the law. The Court recently denied, for procedural reasons, a petition for writ of certiorari in Jane Doe v. Facebook, a case in which the petitioner was sex trafficked as a minor because Facebook’s products connected her with a sex trafficker. Even so, Justice Thomas wrote that the Court should “address the proper scope of immunity under §230 in an appropriate case.”

Antitrust Law

Addressing Big Tech companies’ abuse of market dominance and potential violations of antitrust law could be achieved by more aggressive enforcement of existing antitrust laws in these digital markets and/or by updating our antitrust laws where needed to address certain market failures. The current, dominant antitrust approach is that of the Chicago School, which uses the consumer welfare standard articulated by Judge Robert Bork in his 1978 book The Antitrust Paradox as the standard for evaluating whether a company has abused its monopoly power and violated antitrust law.2 This standard has mainly used the metric of price to measure whether there is harm to the consumer and thus monopolistic behavior. The challenge, though, is that Big Tech’s services are “free,” and instead consumers pay for the service with their personal data, time, and attention, and these companies then make profits from advertising revenue. The longer a customer stays engaged on internet platforms, the more ad revenue the platforms make, and they also take the data collected from users and sell it to advertisers, allowing advertisers to better “target” their ads to consumers.

Thus, these companies operate in a “two-sided” market, on one side selling a “free” service to consumers and on the other side selling advertising space to advertisers. The big questions looming over antitrust law today are: How can antitrust enforcers adapt and effectively apply the current consumer welfare standard to these digital markets? Can enforcers measure the harm (increasing cost) to consumers other than by using price as the metric, such as using time or attention? Is there a way to treat censorship as a consumer harm, as a decrease in the quality of the good you are “purchasing”? (In antitrust law, there is a category for measuring quality as an adjusted price.) Or is the key to really look for monopoly behavior on the other side of the screen, the advertising market, where the traditional metric of price can still be used in assessing the prices charged to advertisers?

Any antitrust lawyer will tell you that the most important aspect of an antitrust case is market definition; a case rises and falls depending on how the market is defined. For example, in the FTC’s complaint against Facebook for antitrust violations, it chose to go after Facebook for its dominance in the social networking market, but it was originally unsuccessful. When the FTC refiled its complaint in August of last year, many observers thought it should instead go after Facebook in the digital advertising market. Evidence shows that Google is clearly a monopoly in the advertising market, with Facebook as a close runner-up—a so-called “digital duopoly.” The FTC chose, however, to stay in the social networking market and provide more specific evidence to prove Facebook’s control over that market. It is unclear how that will unfold, but the takeaway is that digital markets are complex.

Another issue with regard to Big Tech is that certain acquisitions may not have violated current antitrust laws, but they have allowed companies to create private ecosystems for their products, which then greatly raise the switching costs to consumers and make it nearly impossible for start-ups to break in. Take, for example, Google buying YouTube. Or Facebook buying Instagram and WhatsApp. While these mergers may not have violated antitrust laws, they have drastically increased the barriers to entry for competitors. Thus, many in Congress are now calling for updates to our antitrust laws to solve these market failures.

As Senator Tom Cotton recently wrote in an opinion piece for Fox News:

These companies have acquired this extraordinary level of power, in part, by consuming and combining with their major competitors. Google bought YouTube, Facebook acquired Instagram and WhatsApp, and Apple bought Intel’s smartphone business. These companies, once spritely startups themselves, know the potentially disruptive effects of new firms—and they will snuff out burgeoning new competitors whenever and wherever they can. . . . Technology companies are not inherently evil or malicious, and it is natural for successful businesses to continue to expand. However, when any set of corporations reaches this level of power and engages in such pervasive anti-competitive behavior, it poses a threat to the free market system.

Given these threats, some lawmakers are now arguing that it is time to abandon the consumer welfare standard and return to a Brandeisian approach to antitrust with a focus on preventing any one company from acquiring too much power over the economy. Currently, this “trustbusting” approach is being advanced by Senator Josh Hawley, who believes that antitrust law should not rely on consumer welfare alone, and that there are important political choices that must underpin the economics. This school believes that excessive concentration is not just an economic threat but a threat to democratic values. At a certain size, companies become so powerful that they threaten our system of government.

The consumer welfare standard moved antitrust law away from such political decisions, making consumer economics the primary or perhaps only standard of analysis. Hawley and others are hearkening back to an older antitrust tradition in American history, which holds that narrow consumer welfare analyses do not always reflect the common good and that the free market does not always result in what is best for our democratic republic.

Historically, Americans conceived of republican liberty as requiring not just a particular kind of government but also a particular kind of society and economy. They believed that oligarchy and economic concentration were not natural developments but political choices. Theodore Roosevelt, in particular, saw that excessive concentrations of wealth and power were poisonous to the republic. And this republican tradition linked liberty to participation in self-government. Roosevelt thus saw that trustbusting against the robber barons of his era was necessary to maintaining and defending a “republic of the common man,” in which the government is not controlled by a concentrated power or by oligarchic corporations, but rather responsible to the common citizen.

Antitrust policy has been at the center of attention recently due to several proposed bills on the Hill. Notably, six bills passed out of the House Judiciary Committee (HJC) last year and are now awaiting a floor vote in the House. Of those, most also have Senate companion bills introduced. There have been other bills introduced by Republicans as well, ranging from Senator Mike Lee’s TEAM Act, which seeks to codify the consumer welfare standard and give the Department of Justice exclusive antitrust enforcement authority, to Senator Hawley’s Trust-Busting for the 21st Century Act, which bans all mergers and acquisitions by companies with a market capitalization exceeding $100 billion.

While there are a lot of different approaches being put forward, it seems that most on the right seek a narrower approach than Senator Hawley’s trustbusting. Most attention has been focused on the bipartisan American Innovation and Choice Online Act (AICOA), introduced by Senators Grassley, Kennedy, and Klobuchar. The bill would prohibit dominant platforms from favoring their own products or services and prohibit specific forms of conduct that are harmful to small businesses, entrepreneurs, and consumers, like preventing another business’s product or service from interoperating with the dominant platform or requiring a business to buy a dominant platform’s goods or services in order to get preferred placement on its platform. The Senate Judiciary Committee advanced it earlier this year and it now awaits a floor vote. It is unclear whether it has the sixty votes needed to pass the Senate (if it does get brought to a vote). While some Republicans have supported it, others like Senator Lee have voiced concerns that it would lead to unintended consequences that would harm consumers and grant broad powers to the FTC.

And while there have been bipartisan voices encouraging Congress to bring the AICOA to a floor vote in the House and Senate, opposition to it has also been increasing. The same groups that oppose Section 230 reform also argue against antitrust reforms on the grounds that such reforms will threaten our national security and harm consumers. Matthew Feeney at Cato argues that by prohibiting Big Tech companies from “self‐preferencing” their products on their own platforms, AICOA would have a chilling effect on services and products that are good for consumers.

Klon Kitchen at AEI argues that the antitrust reforms being proposed will harm our ability to compete with China and endanger national security. He is concerned that requirements for interoperability and sideloading could degrade user cybersecurity and that hamstringing large American tech companies with these reforms will weaken our warfighting capabilities in the long run. Likewise, twelve former national security officials wrote a letter to Congress stating that antitrust measures risk undermining America’s advantage vis-à-vis China. They contend that such measures would impede U.S. companies and reduce their ability to pursue innovation by degrading critical R&D priorities, which would allow foreign competitors to displace leaders in the U.S. tech sector. All twelve signatories, however, have ties to major tech companies, either working with them directly or serving with organizations that raise money from them, according to a Politico analysis.

In an opinion piece for the Federalist, Senator Lee pushed back against these arguments, writing,

competition in Big Tech doesn’t threaten America, it threatens the monopolists—and that makes America stronger. Insulating American companies from competition out of a fear of foreign competitors will do the opposite of what Big Tech claims to want: we will be stuck with stagnant monopolists too complacent either to benefit American consumers or to protect us from foreign threats. In fact, it is Big Tech companies themselves that pose the greatest threat when it comes to China. They not only can’t protect us from foreign threats, but in some cases actively cooperate with them.

Lee names just a few of these corporations: Google has acknowledged developing a filtered version of its search engine to satisfy Chinese censors. The New York Times revealed earlier this year that Apple has stored data on Chinese government servers, shared customer data with the Chinese government, removed apps from its App Store to appease the Chinese government, and banned apps from a critic of the Chinese Communist Party. Facebook has admitted to sharing data with Chinese state-owned companies.

Furthermore, national security objections to date have ignored the reality that Big Tech companies are so large that they are actually diverting talent away from other crucial industries, like infrastructure and military hardware, for the sake of inconsequential projects for these platforms, like developing new emojis. Moreover, R&D investment has been shrinking at many of these companies already, because they have little incentive to undertake risky investment in innovation when they can wield their market dominance to squash or buy up the competition.

Reforms can also be narrowly targeted. There are a few bipartisan bills that offer solutions to address specific market failures. The Open App Markets Act (OAMA), introduced by Senators Marsha Blackburn, Richard Blumenthal, and Amy Klobuchar, has now passed out of the Senate Judiciary Committee. OAMA is not an overarching antitrust bill, but seeks to open up competition in the app store market. If passed, it would prevent companies from requiring developers to use their in-app payment systems in order to distribute apps in their app stores, and it would require app store operating systems to allow users to install third-party apps or app stores. The bill offers a good model of narrow reform to correct a specific market failure in the Big Tech space.

Another targeted bipartisan bill seeks to address competition in the news market. The Journalism Competition and Preservation Act (JCPA), also known as the “Safe Harbor Bill,” would pause antitrust restrictions for four years to let publishers unite to negotiate with Facebook and Google for fair compensation for news content. Currently, no news company is in a position to negotiate with Google by itself.

Finally, Senator Lee recently introduced the Competition and Transparency in Digital Advertising Act, joined by Senators Klobuchar, Blumenthal, and Cruz. The bill aims to restore and protect competition in digital advertising by eliminating conflicts of interest that have allowed the leading platforms in the market to manipulate ad auctions and impose monopoly rents on a broad swath of the American economy.

Some conservatives have been looking to antitrust reform to also solve censorship issues. While antitrust does not directly address this problem, it could certainly help, since monopolies are not just distorting the free market, they are distorting the free exchange of ideas. Tech monopolies have the ability to wield massive power over the flow of information in our country today. The reason they can censor effectively is because there are no credible alternatives to the major communication channels they operate. In this regard, increasing competition could provide a longer-term answer to censorship, allowing alternative channels to effectively enter and compete in the market. But even if existing conglomerates are broken up and future mergers are prevented from forming, there is still a form of ideological homogeneity among those in Silicon Valley who run these companies. Again, this is why more conservative start-ups are needed, and opening up competition in these markets could certainly help with that.

One important final caution with antitrust reform is that conservatives are and should be wary of giving too much power and discretion to the Federal Trade Commission (FTC), an insulated, independent agency. Congress ought to be very prescriptive with any antitrust reforms it passes and not allow a politically unaccountable agency to determine how antitrust laws are updated. The Left will certainly try to circumvent Congress and the courts by rulemaking through the FTC. And so Republicans who may not be comfortable supporting the current bipartisan bills introduced, because they empower federal agencies, must be prepared to offer up alternative antitrust bills that address these issues. This is especially true for Republicans on the House and Senate Judiciary Committees when Republicans take back Congress.

Common Carrier Law

The third and final policy tool currently being explored is common carrier law, specifically the nondiscrimination provisions found in this body of law. Common carrier law has received a lot of attention recently due to Justice Clarence Thomas’s concurring opinion in Biden v. Knight First Amendment Institute in April 2021, which broached the possibility that internet platforms ought to be treated as common carriers. I recently coauthored a Wall Street Journal essay with Columbia Law School professor Philip Hamburger that argued for the same, and a social media law that Texas passed last year takes a similar approach.

Common carriage refers to a diffuse set of legal rules that for centuries has ensured that basic economic, transportation, and communication channels are open and accessible to all members of society. It reflects a practical approach to the issue of market dominance in industries that provide public goods. Under common carriage, the government gives dominant firms certain privileges, such as immunity from antitrust laws or tort liability, and in exchange these firms have an obligation to provide their goods and serve all comers in a nondiscriminatory manner. In this way, common carrier regulation operates as a classic carrots-and-sticks “regulatory bargain.”

When a company offers its services to the public, it can qualify as a common carrier in one of two ways. It can serve a public function, so that even a small bus company can be treated as a common carrier. Or it can enjoy market dominance—when the services of one or a few companies are so prevalent as to leave the public with little alternative. The large tech companies meet both definitions. Digital platforms that hold themselves out to the public now resemble other traditional common carriers, such as telephone and cable companies, providing universal communications platforms. In a way, Big Tech is already subject to common carrier regulation because Section 230 recognizes the tech companies as akin to common carriers. These platforms already receive the first half of the common carriage regulatory bargain: Section 230 immunity protections from certain suits related to third-party content and content moderation decisions, i.e., from traditional publisher liability. But with the emergence of dominant social media platforms, the absence of a stick to ensure the free and democratic provision of this public good has become increasingly apparent.

The solution would be to legislatively impose common carrier law’s nondiscrimination provisions on Big Tech companies, specifically social media platforms, to ensure that all comers have equal access to these platforms. This can be done at the federal or state level. One federal bill, the 21st Century FREE Speech Act, was introduced by Senator Bill Hagerty last year. It is, however, unlikely to pass anytime soon, so this type of regulation will probably have to come from the states.

The state of Texas passed an antidiscrimination social media law (HB 20) last September, drawing on common carrier law to prohibit social media companies from viewpoint discrimination. The law prohibits social media platforms with more than fifty million active monthly users in the United States from censoring users or their expressions based on the viewpoint expressed. Along with explicitly prohibiting viewpoint discrimination by social media companies, the law enables censored users to seek declaratory and injunctive relief in court. Texas can lawfully regulate social media by treating these platforms as common carriers. Communications networks have always operated under these nondiscrimination requirements. The Texas social media law simply applies these historical precedents to the modern public square—social media platforms. And other states, like Georgia and Ohio, have also introduced and are considering similar bills.

Unfortunately, the Texas law was enjoined by an Obama-appointed district court judge last December. The U.S. Court of Appeals for the Fifth Circuit subsequently stayed that injunction, allowing the law to go into effect, but the Big Tech platforms, represented by their trade group NetChoice, made an emergency application to the U.S. Supreme Court, which the Court granted 5–4. The law is now effectively blocked from taking effect until the Fifth Circuit makes a final ruling on the merits of the law.

While litigation has been proceeding over the Texas law, an Ohio state judge recognized that Ohio “has stated a cognizable claim . . . that Google Search is a common carrier,” and rejected Google’s motion to dismiss the Ohio attorney general’s lawsuit (the Ohio attorney general had brought a lawsuit asking the Ohio state court to declare Google a common carrier). The Ohio state court provided a scholarly and measured appraisal of common carrier law, recognizing that “Google’s search function, as a private business, [could affect] the public concern to such an extent that it should be declared a common carrier.”

Opposing common carrier treatment, Big Tech, represented by NetChoice, has argued that platforms’ content-moderation decisions—i.e., their decisions to remove or deprioritize posts or deplatform users, and thereby curate the material they disseminate—are “editorial judgments” that are protected by the First Amendment under long-standing Supreme Court precedent. They state that when a platform selectively removes what it perceives to be incendiary political rhetoric, pornographic content, or public health misinformation, it conveys a message and thereby engages in “speech” within the meaning of the First Amendment.

Yet defending social media’s discriminatory censorship as “editorial discretion” that expresses a coherent “message” worthy of First Amendment protection, like a newspaper op-ed page or a parade, seems to be in direct conflict with how these companies simultaneously insist that they are not publishers or editors and cling to their immunity from publisher liability under Section 230. How can platforms engage in content moderation that conveys a message and constitutes “speech” that is protected by the First Amendment but at the same time not be held liable for those editorial decisions, because Section 230 gives them immunity from publisher liability?

Unlike a newspaper editor or parade organizer, social media companies do not review all the content they host; they review only a tiny fraction. A newspaper op-ed page or parade expresses the judgment of its editors and organizers with every article or marcher it includes, as well as with the newspaper or parade as a whole. By necessity, a newspaper or parade, given its limited size, exercises powerful editorial control over its content.

In contrast, a social media firm is a passive conduit. It rarely edits, and its infinite bandwidth gives it no need to edit. Moreover, how can platforms express themselves in the billions of posts they cannot review? Or how can the platforms’ inconsistent, and often hidden, acts of content moderation constitute a coherent “message,” let alone an expression worthy of First Amendment protection? Finally, common carriage requirements prohibiting platforms’ discriminatory censorship of others in no way limit or burden the platform’s own speech, because social media platforms are free to tweet or post as much as they’d like.

Updating COPPA to Protect Kids

Finally, in addition to these three major tools, it is worth noting that Congress could also amend the Children’s Online Privacy Protection Act (COPPA) of 1998 to make this law much more effective at protecting children online today from the harms of Big Tech, as I explained previously in National Review. A few key reforms would make a big difference. One is raising the age of a “minor” to eighteen years old. Another is lowering platforms’ liability standard from “actual knowledge” (the highest liability standard in tort, under which the platform must have specific and certain knowledge that unauthorized, underage individuals were using its platform) to “constructive knowledge,” which would make platforms responsible for what they “should reasonably know” given the nature of their business and the information they already collect from their users.

Congress could also enhance enforcement by eliminating COPPA’s preemption provision so that states could bring tort claims alleging invasion of privacy. Additionally, it could create a private cause of action under the law so that parents could bring suits against platforms for violations of children’s privacy.

The Republican Tradition and Republican Legislation

The various problems presented by Big Tech have reminded conservatives that the historic republican tradition not only sought to protect Americans from the intrusion of Big Government but also from Big Business, from having a republic run by oligarchs rather than the common man. There are cases when the free market will not solve political problems, and government intervention is needed to ensure the best outcomes for human flourishing and the common good. Absolutism about market freedoms has become untenable for a growing number of conservatives.

But in the near future, it seems increasingly unlikely that either of the two main bipartisan Big Tech bills currently being pushed in the Senate, the American Innovation and Choice Online Act (AICOA) and the Open App Markets Act (OAMA), will be brought to a floor vote before the midterms. If they don’t receive a vote soon, the AICOA, along with the other bipartisan antitrust bills that passed out of House Judiciary last year, probably won’t go anywhere in a Republican Congress. Moreover, a Republican Congress is also not likely to prioritize broad antitrust efforts, as many Republicans still appear wary of this approach for addressing Big Tech. The more targeted approaches of OAMA or Senator Lee’s Digital Advertising Act, however, could stand a chance in the next Congress. And Senators Blackburn and Lee will continue to be leaders for Republicans in the Senate on Big Tech issues.

Regarding Section 230, a Republican Congress could pass a reform bill. Nevertheless, it will take a lot of work to reach consensus and Republicans will have to be willing to make compromises on their ideal proposals in order to get to a widely agreed-upon bill. There are a few concerted efforts underway currently on this front, from both the House Energy and Commerce Republicans led by Representative Cathy McMorris Rodgers and the Republican Study Committee led by Representative Jim Banks. McMorris Rodgers and Banks are likely to be leaders in the House on Big Tech issues after the midterms, particularly with regard to Republican efforts on a Section 230 bill.

Any Section 230 reform bill they are able to pass, however, will likely not become law until a Republican is back in the White House. Nonetheless, it is important that Republicans use these next two years to prepare a Section 230 bill for when they do retake the executive.

Regarding common carriage, for the time being this policy approach will be advanced by the states. The Texas law is a good model and the Fifth Circuit will likely uphold it. I expect a few other red states will be bold enough to pass similar common carrier laws in the next year. Big Tech, however, won’t go away without a fight, so we can expect the Texas law to ultimately be appealed up to the Supreme Court. Justices Thomas and Alito at least seem willing to uphold such an approach as constitutional.

The growing bipartisan agreement on harms to kids seems durable and is likely the most viable bipartisan path forward in the near term for Congress on Big Tech. Senators Blackburn and Blumenthal introduced the Kids Online Safety Act (KOSA) this past year and it advanced out of the Senate Commerce Committee with a resounding 28–0 vote. The strong bipartisan consensus signals the potential for further bipartisan efforts to protect kids online. KOSA will hopefully make it to a floor vote soon.

Many challenges, though, certainly lie ahead for conservatives in addressing the problems posed by Big Tech to our free speech, our democratic republic, our economy and free market, and to our children and families. But we have tools available to us. We need not overhaul our system of government or rule of law to counter Big Tech; we can use existing resources in our tool kit, legal solutions that have been deployed in the past to address other market failures. The policy battles over how to deploy these tools effectively will be difficult, but conservatives must engage in them, and fight to build consensus, or otherwise allow the American republic to succumb to the oligarchy of Big Tech. And while advocating for good legislation at the federal level, conservatives should not neglect state legislative efforts or the courts as other avenues for making meaningful progress.